How to Rank in Google AI Mode: The 2026 Strategy Guide

Search used to be predictable. You typed a few words, scrolled through ten blue links, and clicked the best one. That era isn’t gone, but it no longer defines the experience. Today, many searches start as conversations, and increasingly they end inside an answer you never have to click away from. The shift matters because the game has moved from ranking position to citation inclusion: from being the top link to being the trusted source the AI uses when it composes an answer.

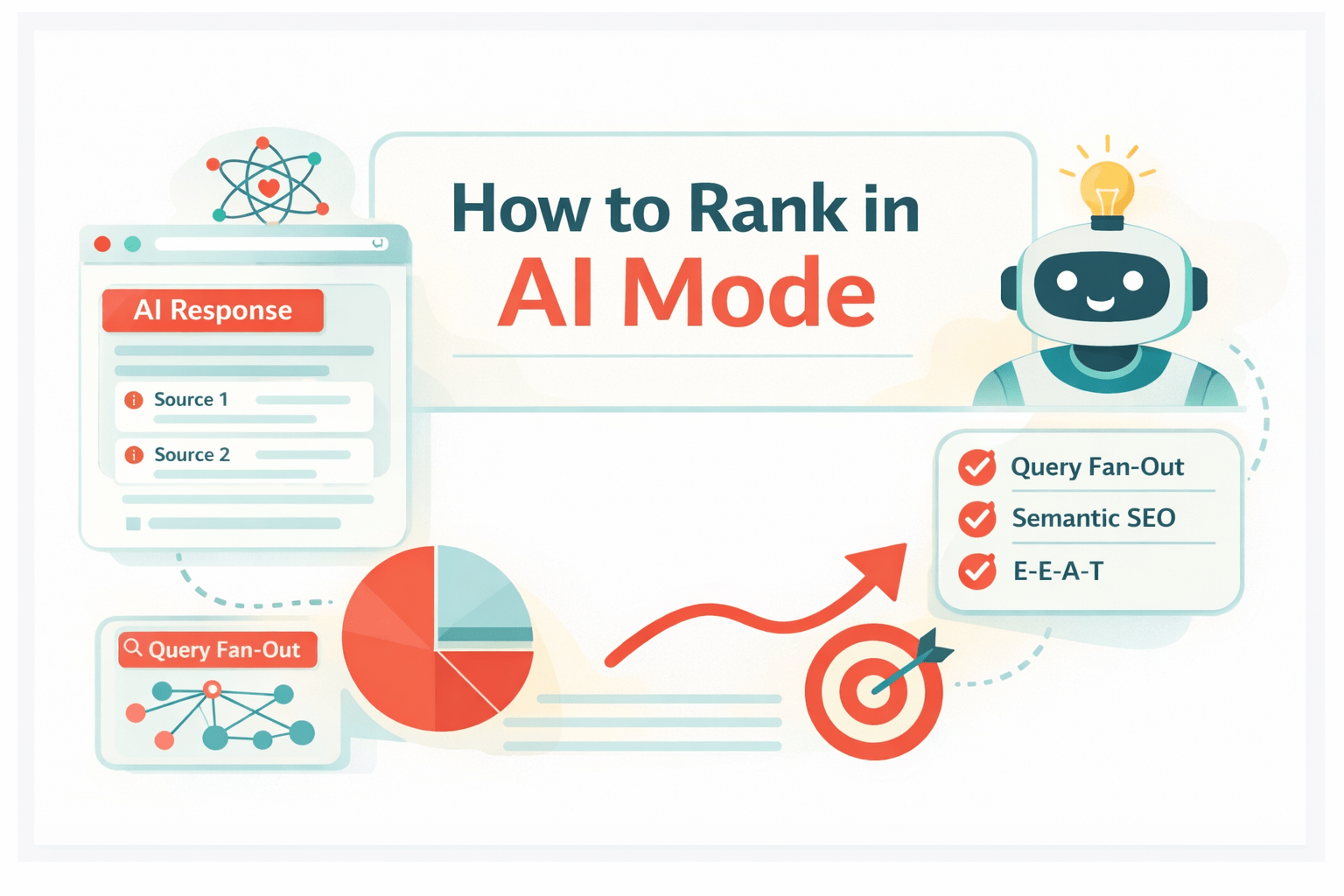

Google AI Mode is the new opt-in conversational search surface powered by Gemini. It breaks complex prompts into sub-queries, pulls in live web results, Knowledge Graph facts, and specialized graphs like Shopping, then returns one coherent response that usually includes citations back to the web. That means a different set of ranking signals and a different approach to content and technical SEO. (blog.google)

Search isn’t just about matching keywords anymore. It’s about answering layered intent through conversation. This guide shows you how to map content to the hidden questions the AI asks, how to structure passages the AI can extract with confidence, and how to give the machine the signals it needs to treat your brand as a trusted source.

Key takeaways

- Google AI Mode is an opt-in, conversational search surface powered by Gemini that shifts ranking dynamics from traditional positions to citation inclusion within synthesized answers.

- The ‘Query Fan-Out’ mechanism breaks complex prompts into latent sub-queries, meaning content needs to answer implied questions rather than just match a single keyword. (developers.google.com)

- Semantic Completeness is a critical ranking factor. Content needs self-contained passages that the AI can extract directly to assemble high-confidence answers.

- Use Reverse Question Answering prompts to audit pages, find gaps, and make sure your pages address the specific hidden questions the AI generates.

What is Google AI Mode?

Google AI Mode is a dedicated conversational search surface you can opt into when using Google Search. It’s powered by Gemini and designed for multi-turn interactions, so users can refine queries, ask follow-ups, and get deeper syntheses. Unlike AI Overviews (which appear as single-turn summaries within the standard results page), AI Mode gives you a chat-like experience and often provides richer, multimodal responses.

Under the hood, AI Mode synthesizes three major information sources: the live web, Knowledge Graph facts, and specialized graphs like Shopping. It runs many internal queries to collect precise facts, then composes a single answer that references different pages and data sets. The practical impact? Placement in a numbered list matters less than whether the AI will cite your page when answering a user. The priority becomes being the factual, self-contained passage the model can quote or base its reasoning on. (developers.google.com)

| Factor | AI Overviews | Google AI Mode |
|---|---|---|
| User interface | Single-turn summary on SERP | Multi-turn conversational interface (tab or chat) |
| Interaction style | Read-only, limited follow-ups | Full conversation, follow-ups, clarifying prompts |
| Model | Overview-level SGE models | Gemini-based, multimodal, higher reasoning power |
| Query complexity | Short to medium prompts | Handles complex, multi-criteria prompts with deep reasoning |
| Source use | Concise synthesis of web results | Parallel sub-queries, Knowledge Graph, Shopping Graph integration |

How AI Mode works: the power of Query Fan-Out

At its core, AI Mode relies on a process known as Query Fan-Out. Instead of treating a user prompt as one single retrieval target, the system decomposes it into many latent sub-queries, issues them in parallel, and aggregates the results. The fan-out step isn’t complexity for its own sake. It’s about precision. By breaking a question into components, the AI can find targeted facts, compare them, and assemble a more complete answer.

Think of a complex user prompt as a set of needs hidden inside a single sentence. The AI will infer those needs, convert them into sub-queries, and search for specific facts. Those sub-queries could be surface-level queries about definitions, procedural steps, product specs, or evidence checks against a Knowledge Graph. Each sub-query returns the best supporting passages from the indexed web, then the model evaluates and synthesizes those passages into a single narrative. Net effect: a single answer that draws on multiple specialist pages.

For SEOs, the implication is straightforward but not obvious at first glance. Ranking is no longer only about the page as a whole. It’s about the passages inside the page. The AI will choose the passage or passages that best answer each sub-query. So your content needs to be chunked into self-contained, factual units that map cleanly to likely sub-questions. If your page buries the answer inside a long, meandering paragraph, the AI may skip it for a competitor with a crisp, extractable passage.

What are latent questions?

Latent questions are the implied, unstated needs behind a user’s search. They’re what the AI infers when it decides to fan out. For example, a user who asks “best running shoes” may implicitly need answers to several secondary questions. Covering those angles is how your content matches the specific sub-queries the AI generates.

Examples of latent questions:

- What’s the runner’s foot type and arch support needed?

- How durable are these shoes under heavy weekly mileage?

- What price ranges exist for comparable models?

- How do these shoes perform in different weather conditions?

- Are there return policies and warranty differences to consider?

Answering these explicitly, in self-contained passages, gives the AI multiple grab points to cite from your page.

Example of Query Fan-Out in action

Imagine the user prompt: “How to make pizza dough.” AI Mode might fan out into these sub-queries:

- What temperature should yeast be activated at?

- Which types of flour affect texture and gluten development?

- How long should dough be kneaded by hand and by machine?

- What hydration percentage yields a Neapolitan-style crust?

- How long do the first and second proofs take, and under what humidity?

- What are common troubleshooting tips for dense dough?

The model searches sources that cover each point, extracts concise passages, and synthesizes an answer that cites a mixture of recipe pages, food science references, and forum troubleshooting threads. If your page contains precise, extractable passages for the yeast temperature, flour types, and proofing times, the AI can pull those directly and cite you in the final response. If your content only mentions “use good yeast” and leaves the rest vague, you miss the chance to be cited.
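To make the fan-out idea concrete, here is a toy sketch in Python. It is not how Google retrieves or scores passages: plain word overlap stands in for whatever relevance model is really used, and the URLs and passage text are invented for illustration. What it does show is the shape of the process: each sub-query is answered independently, and the final answer can end up citing several different pages.

```python
import re

# Toy corpus of (source, passage) pairs. Sources and text are made up for illustration.
PASSAGES = [
    ("example.com/yeast", "Activate dry yeast in water at 105 to 110 F."),
    ("example.com/flour", "Bread flour has more protein, so it builds more gluten than all-purpose flour."),
    ("example.com/proof", "The first proof takes about 60 to 90 minutes in a warm kitchen."),
]

def tokens(text):
    """Lowercase word set used for a crude overlap score (an assumption, not a real ranker)."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def best_passage(sub_query, passages):
    """Return the (source, passage) pair that shares the most words with the sub-query."""
    query_words = tokens(sub_query)
    return max(passages, key=lambda pair: len(query_words & tokens(pair[1])))

def fan_out(sub_queries, passages):
    """Answer each sub-query on its own, keeping the source so it can be cited."""
    return {sub_query: best_passage(sub_query, passages) for sub_query in sub_queries}

sub_queries = [
    "What temperature should yeast be activated at?",
    "Which flour gives more gluten?",
    "How long does the first proof take?",
]

answers = fan_out(sub_queries, PASSAGES)
for question, (source, passage) in answers.items():
    print(f"{question}\n  -> {passage} [{source}]")
```

Notice that each passage wins because it answers one narrow question in a single sentence. A page that only said “use good yeast” would share almost no words with any sub-query and would never be picked.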

Strategy 1: identify and answer latent questions

Stop thinking in headline keywords. Start mapping topics into question trees. Topic Mapping means listing the head query and then building the network of follow-ups and edge cases the AI will probably ask. This moves you from shallow coverage to the depth AI Mode expects.

How do you find those latent questions? Start with Reverse Question Answering. Take a top-ranking page for the head query, feed the page text into a large model, and ask it to list the explicit questions the page actually answers. The difference between what the page claims to cover and what it actually answers is where most opportunities live.

The practical steps:

- Pick your target page and paste the main body into a prompt.

- Ask the model to list exact, short-form questions that the page provides a direct, complete answer to.

- Compare the list to your topic map, and flag gaps where your page needs more self-contained passages.

- Run the same prompt on competitor pages to find valuable sub-queries they cover and you don’t.

Using this method you can prioritize which latent questions to add, and which to fold into the page as semantic units.

The Reverse Question Answering prompt

“Analyze the following text and list the specific questions it provides a direct, complete answer to. For each question, give the sentence or paragraph that contains the direct answer, and note any implied follow-up questions that the text doesn’t answer.”

Run that against your own pages, and against the pages that are already being cited by AI Overviews or AI Mode. The output shows where your content is focused and where it’s fluffy.
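If you want to run this audit across many pages, the workflow can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: `build_prompt` and `find_gaps` are hypothetical helper names, no specific model API is called (you would paste the prompt into whichever model you use), and the gap check is a naive exact match after normalization, where a real audit would need fuzzier matching.

```python
# The prompt text mirrors the Reverse Question Answering prompt above.
RQA_PROMPT = (
    "Analyze the following text and list the specific questions it provides "
    "a direct, complete answer to. For each question, give the sentence or "
    "paragraph that contains the direct answer, and note any implied "
    "follow-up questions that the text doesn't answer."
)

def build_prompt(page_text):
    """Combine the audit prompt with the page body (hypothetical helper)."""
    return f"{RQA_PROMPT}\n\n---\n{page_text.strip()}"

def normalize(question):
    """Lowercase, collapse whitespace, and drop the trailing question mark."""
    return " ".join(question.lower().strip(" ?").split())

def find_gaps(topic_map, answered_questions):
    """Return topic-map questions the page does not answer (exact match only)."""
    answered = {normalize(q) for q in answered_questions}
    return [q for q in topic_map if normalize(q) not in answered]

topic_map = [
    "What temperature should yeast be activated at?",
    "What hydration percentage yields a Neapolitan-style crust?",
]
# Pretend this list came back from the model for your page.
model_output = ["what temperature should yeast be activated at"]

print(find_gaps(topic_map, model_output))
```

Running the same comparison with a competitor’s model output in place of your own shows which sub-queries they cover and you don’t.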

Mapping the information journey

Look at the user’s journey from a head query to the follow-ups. People Also Ask, Related Searches, and user forums are good starting points. Group connected questions into logical clusters and put them on a single comprehensive page when possible. AI Mode prefers pages that keep related semantic units together because that reduces the friction of stitching content from multiple pages into one synthesized answer.

Group questions like:

- Definitions and quick answers, for users who want a short response.

- Procedural steps with exact parameters, for how-to queries.

- Troubleshooting and edge cases, for follow-up prompts.

- Comparison tables and trade-offs, for decision-making queries.

Put each in its own clearly labeled section so the AI can extract one passage at a time.

Strategy 2: structure content for Semantic Completeness

Semantic Completeness means every content block should be able to stand on its own, answering a single question fully and precisely. AI extractors pick passages that are self-contained. If your page has many such passages, the system can pull several and assemble a confident answer without needing to reference other parts of the page.

Why does this matter? The AI’s optimizing for confidence. It prefers passages that contain definitions, exact values, or stepwise instructions in a compact unit, because those reduce ambiguity. Your goal is to create those compact units intentionally.

How to do it:

- Use question-style H2 and H3 headings that mirror user queries.

- Put the direct, concise answer in the first 1 to 3 sentences after the heading.

- Follow with context and evidence, but keep the core answer extractable.

- Use bullets, numbered lists, and short paragraphs to create natural extraction points.

The optimal passage length

Passage-optimization analyses suggest that extractable answer passages usually fall into predictable ranges: the direct answer often sits around 40 to 60 words, while the full contextual passage that gives the model enough support runs 130 to 170 words. Treat these as rules of thumb rather than hard thresholds. That structure lets the AI pull the short answer for a quick response, while also having longer context to verify and support the statement. Aim to place the core answer immediately after the heading so the model doesn’t have to search through unrelated content to find it.

Don’t bury the lead. If the first paragraph after a header is fluffy, the AI will skip it for a competitor that places the answer first.
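A quick way to check your own sections against those ranges is a small word-count audit. The sketch below is an assumption-laden illustration: `audit_section` is a hypothetical helper, it treats the first paragraph after the heading as the direct answer, and it uses the 40 to 60 and 130 to 170 word ranges quoted above as defaults you can override.

```python
def word_count(text):
    """Count whitespace-separated words."""
    return len(text.split())

def audit_section(heading, body, answer_range=(40, 60), passage_range=(130, 170)):
    """Check the first paragraph and the whole section against target word ranges."""
    paragraphs = [p.strip() for p in body.split("\n\n") if p.strip()]
    first_paragraph = paragraphs[0] if paragraphs else ""
    answer_words = word_count(first_paragraph)
    passage_words = word_count(body)
    return {
        "heading": heading,
        "answer_words": answer_words,
        "answer_ok": answer_range[0] <= answer_words <= answer_range[1],
        "passage_words": passage_words,
        "passage_ok": passage_range[0] <= passage_words <= passage_range[1],
    }

# Placeholder text: a 50-word answer paragraph followed by 100 words of context.
sample_body = " ".join(["word"] * 50) + "\n\n" + " ".join(["word"] * 100)
report = audit_section("What hydration yields a Neapolitan-style crust?", sample_body)
print(report)
```

A section that fails `answer_ok` because the first paragraph is too long is usually a sign the answer is buried under setup text.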

Using question-based headings

Before: “Benefits”

After: “What are the benefits of X for small businesses?”

Question-based headings do two jobs. They signal to humans what the section answers, and they create a direct semantic match for the AI’s latent sub-queries. As you audit existing content, rename vague headers into specific questions and move the concise answer up front.
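During an audit, you can flag vague headers automatically before rewriting them by hand. The following sketch uses a deliberately simple heuristic, which is my assumption and not a known ranking rule: a heading counts as question-style if it ends with a question mark and starts with a common question word.

```python
# Common question openers; extend this tuple for your own content.
QUESTION_WORDS = (
    "what", "how", "why", "when", "which", "who", "where",
    "can", "does", "do", "is", "are", "should",
)

def is_question_heading(heading):
    """True if the heading reads like a question under the simple heuristic above."""
    words = heading.strip().lower().split()
    if not words:
        return False
    return words[0] in QUESTION_WORDS and heading.strip().endswith("?")

def vague_headings(headings):
    """Return the headings that should be considered for a rewrite."""
    return [h for h in headings if not is_question_heading(h)]

headings = [
    "Benefits",
    "What are the benefits of X for small businesses?",
    "Pricing",
]
print(vague_headings(headings))
```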

Strategy 3: leverage multimodal content

Gemini and AI Mode are multimodal by design, meaning they can understand images, text, and video. Pages that include well-annotated visuals and high-quality videos get an advantage because the AI can use visual evidence to corroborate textual claims. That makes a difference for how often a page gets selected to support an AI answer.

Practical types of media to include:

- Original photos or diagrams that show product details or process steps.

- Short how-to videos with clear, chaptered timestamps and accompanying transcripts.

- Comparison charts embedded as HTML or styled lists, not as flattened images alone.

- Alt text and captions that explain what the visual shows in plain language.

Sites that lean on generic stock images, or that hide important information inside an unindexed PDF, make it harder for the AI to verify claims, which lowers their odds of being selected. There are indications that pages with images and videos see materially higher selection rates in AI-driven results, so invest in original visual assets where it matters.

Optimizing images for AI context

- Use descriptive, human-readable filenames, not generic strings.

- Write alt text that reads like a direct caption, answering what the image shows and why it matters.

- Place a short caption immediately beneath the image that includes the key data points or callouts.

- Prefer original photos or screenshots for unique steps or product details.
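The first two checks in that list are easy to automate. Here is a minimal sketch using Python’s standard-library HTML parser. The `GENERIC_NAME` pattern is my own assumption about what counts as a generic filename (things like `image-1` or `IMG_1234`), so adjust it to your media library.

```python
import re
from html.parser import HTMLParser

# Assumed definition of a "generic" filename stem: optional prefix plus digits.
GENERIC_NAME = re.compile(r"^(img|image|dsc|photo|screenshot)?[-_ ]?\d+$", re.IGNORECASE)

class ImageAudit(HTMLParser):
    """Collect (src, issue) pairs for every <img> tag in a page."""

    def __init__(self):
        super().__init__()
        self.issues = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        src = attributes.get("src") or ""
        alt = (attributes.get("alt") or "").strip()
        # Filename without directory or extension, e.g. "image-1".
        stem = src.rsplit("/", 1)[-1].rsplit(".", 1)[0]
        if not alt:
            self.issues.append((src, "missing alt text"))
        if GENERIC_NAME.match(stem):
            self.issues.append((src, "generic filename"))

def audit_images(html):
    """Parse an HTML string and return the list of image issues found."""
    parser = ImageAudit()
    parser.feed(html)
    return parser.issues

page = (
    '<img src="/media/image-1.png">'
    '<img src="/media/neapolitan-dough-windowpane-test.jpg" '
    'alt="Stretched dough passing the windowpane test">'
)
for src, issue in audit_images(page):
    print(f"{src}: {issue}")
```

The script cannot judge whether alt text is actually descriptive; it only catches images where it is absent, so a human pass is still needed.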

The power of video transcripts

AI can ingest video content, but it relies heavily on transcripts and captions to find precise statements to quote. For how-to and explainer videos, include a full transcript on the page, plus a short, titled summary with timestamps. If you host on YouTube, populate the description with a clean summary and chapter markers. In many cases YouTube videos are still selected at high rates for “how-to” style queries, so embedding and transcribing those videos can boost your chances of being cited.
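If you already have section start times from your transcript, chapter markers can be generated rather than typed. This is a small sketch with hypothetical helper names and invented chapter titles. It only formats lines in the familiar `M:SS Title` style, so check YouTube’s own chapter requirements before publishing.

```python
def to_timestamp(seconds):
    """Format a number of seconds as M:SS, or H:MM:SS once past an hour."""
    minutes, secs = divmod(int(seconds), 60)
    hours, minutes = divmod(minutes, 60)
    if hours:
        return f"{hours}:{minutes:02d}:{secs:02d}"
    return f"{minutes}:{secs:02d}"

def chapter_lines(chapters):
    """Turn (start_seconds, title) pairs into sorted description lines."""
    return [f"{to_timestamp(start)} {title}" for start, title in sorted(chapters)]

chapters = [
    (95, "Mix and knead"),
    (0, "Activate the yeast"),
    (3725, "Shape and bake"),
]
print("\n".join(chapter_lines(chapters)))
```

The same timestamps can be reused as headings in the on-page transcript, which keeps the video and the text aligned.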

Strategy 4: implement technical SEO for AI

AI depends on the crawlable index, and on well-structured signals. If Google can’t crawl or understand your content, AI Mode can’t use it. That’s why technical SEO remains foundational. A clean index, fast pages, and clear structured data make it far easier for the AI to find and trust your passages.

Focus areas:

- Crawlability: make sure pages are reachable from sitemaps and internal links, and that robots.txt doesn’t block important sections.

- Indexing: check Search Console for indexed pages, and fix stray noindex tags or canonicalization issues that keep content out of the index.

- Performance: mobile friendliness and Core Web Vitals still matter as tie-breakers in user-experience-based ranking decisions.

- Structured data: use schema to describe the page as an Article, HowTo, Product, or FAQ when appropriate, so machines can parse and map facts cleanly.

Note: implement JSON-LD for HowTo, FAQPage, and Article markup where applicable. That markup helps machines identify the semantic role of each passage and makes extraction more reliable.

Essential schema types

- FAQPage: useful for grouping short Q&A passages that are naturally extractable.

- Article: for long-form content, helps identify headline, author, and publishing date.

- HowTo: critical for step-by-step content because it defines steps as discrete, machine-readable items.

- Product: for commerce pages, provides structured specs that support Shopping Graph integration.

- Organization: helps establish brand identity and feeds the Knowledge Graph signal.
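Purely as an illustration rather than production markup, here is one way to generate FAQPage JSON-LD from question and answer pairs in Python. The `faq_jsonld` and `script_tag` helpers are hypothetical names. The property names (`mainEntity`, `acceptedAnswer`, `text`) follow the schema.org vocabulary for FAQPage, and the sample answer text is condensed from this guide.

```python
import json

def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }

def script_tag(data):
    """Wrap the JSON-LD object in the script tag used to embed it in a page."""
    body = json.dumps(data, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'

pairs = [
    (
        "What is Google AI Mode?",
        "Google AI Mode is an opt-in conversational search surface powered by Gemini.",
    ),
]
markup = faq_jsonld(pairs)
print(script_tag(markup))
```

Generating the markup from the same source as the visible Q&A text keeps the two in sync, which matters because structured data should describe content that actually appears on the page.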

Making sure crawlability and indexing work

If Google can’t crawl it, AI can’t see it. Quick checklist:

- Check robots.txt and make sure nothing important is blocked.

- Submit and verify sitemaps in Search Console.

- Confirm canonical tags point to the preferred version of the content.

- Make sure pages are mobile-responsive and meet Core Web Vitals thresholds.
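The robots.txt item on that checklist can be spot-checked with Python’s standard library. This sketch parses a robots.txt string and lists which of your priority URLs are blocked for a given user agent. The rules and URLs are made-up examples, and `urllib.robotparser` applies simple prefix matching, so it may not mirror every nuance of how Googlebot interprets wildcards.

```python
from urllib.robotparser import RobotFileParser

def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt text disallows for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]

# Example rules and URLs, invented for illustration.
robots_txt = """User-agent: *
Disallow: /guides/
"""
priority_urls = [
    "https://example.com/guides/pizza-dough",
    "https://example.com/blog/ai-mode",
]

print(blocked_urls(robots_txt, priority_urls))
```

Treat a hit as a prompt to investigate, then confirm with the URL Inspection tool in Search Console before changing anything.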

Strategy 5: build authority and E-E-A-T

Even with perfect structure and technical signals, the AI prefers high-confidence sources. That’s where E-E-A-T matters: Experience, Expertise, Authoritativeness, Trustworthiness. For AI Mode, these signals show up as explicit author bios, brand presence across the web, and a consensus of citations or mentions that support factual claims.

E-E-A-T isn’t a checklist you complete once. It’s an ecosystem you build over time. Author profiles with clear credentials and links to professional pages help the AI verify expertise. A track record of accurate, well-cited content helps the model treat your page as high-confidence. Off-page signals like mentions in news outlets, industry reports, and trusted forums also feed entity authority and knowledge graph formation.

A short trust checklist to implement on priority pages:

- Clear author bios with relevant credentials and links to LinkedIn or institutional profiles.

- Editorial standards or an “about our process” note explaining fact-checking and sourcing.

- Inline citations or links for statistics and claims, enabling human and machine verification.

- Privacy policy and contact pages that show site legitimacy.

Cultivating brand mentions

The web is the machine’s consensus. Digital PR is how you earn those mentions. Focus on getting quoted in industry roundups, participating in expert interviews, and contributing data to public reports. Even unlinked mentions can be signals for entity recognition, but linked mentions are easier for machines to validate quickly.

Showcasing author expertise

Authors matter. Use detailed bios that state credentials and real-world experience. For technical topics include one-sentence context like, “I’ve worked as an R&D engineer on polymer blends for 8 years,” rather than vague claims. These specifics help the AI connect the author to the content topic when it’s evaluating E-E-A-T.

Measuring success in AI Mode

Tracking AI-driven visibility is messy right now. Google Search Console has been evolving to surface AI-specific appearances, but rollout varies and many AI impressions are still aggregated under broader “Web” categories. Expect some latency in reporting, and treat raw GSC data as directionally useful rather than exact.

Practical metrics to watch:

- Impression trends for target queries, especially spikes after you publish deep, semantically complete pages.

- Brand visibility, measured by impressions of the brand name within search results and conversational responses.

- Click-through behavior: even if AI answers reduce clicks, being the cited source still pays off in brand awareness and downstream conversions from branded searches.

Use a combined view: Search Console for impressions, internal analytics for downstream behavior, and qualitative checks where you run sample prompts in AI Mode to see whether your pages are being cited.
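For the qualitative checks, it helps to log each sample prompt with the domains cited in the answer, then track a simple citation rate over time. The sketch below assumes you record those observations by hand. The prompts, domains, and helper names are all hypothetical, and nothing here queries AI Mode automatically.

```python
from collections import Counter

def citation_rate(checks, domain):
    """Share of sampled prompts whose answer cited the given domain."""
    if not checks:
        return 0.0
    hits = sum(1 for _, cited in checks if domain in cited)
    return hits / len(checks)

def top_cited(checks, n=3):
    """Most frequently cited domains across all sampled prompts."""
    counts = Counter(d for _, cited in checks for d in cited)
    return counts.most_common(n)

# Manually recorded observations: (prompt, domains cited in the answer).
checks = [
    ("how to make pizza dough", ["yoursite.com", "competitor.com"]),
    ("best flour for neapolitan pizza", ["competitor.com"]),
    ("why is my pizza dough dense", ["yoursite.com", "competitor.com"]),
    ("pizza dough hydration percentage", ["competitor.com"]),
]

print(f"Citation rate: {citation_rate(checks, 'yoursite.com'):.0%}")
print(top_cited(checks, n=1))
```

Rerunning the same prompt set after each content update gives you a directional trend to read alongside Search Console impressions.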

Conclusion

AI Mode is an opportunity and a test. The opportunity is to be the concise, confident source that powers machine answers. The test is whether your content is precise, structured, and trustworthy enough for the AI to rely on. Focus on three things: map and answer the latent questions the model will fan out to, craft semantically complete passages that are easy to extract, and build the brand and author signals that raise your confidence score.

Start by auditing your top traffic pages using the Reverse Question Answering prompt. Rename headers as questions, move direct answers to the top of each section, and add the specific data points an AI would want to cite. Over time, that work changes the probability that the next conversation in Google AI Mode will include your site as a trusted citation.

So where does this leave us? The future of search is conversational. Optimize for query fan-out, semantic completeness, and E-E-A-T, and you’re not just optimizing for rankings. You’re optimizing to be part of the answer.

FAQs

1. How do you rank in Google AI Mode?

Ranking in AI Mode is less about positions and more about being included as a cited source. Focus on creating semantically complete passages that answer implied sub-questions, use clear question-style headings, include original visuals and transcripts, and make sure your pages are crawlable and marked up with appropriate schema. Also build author credibility and brand mentions so the AI treats your content as high-confidence.

2. How do you get ranked in AI results?

Do the work of topic mapping. Identify the latent questions the AI will fan out to, and answer each with a self-contained passage on the page. Use structured data like HowTo or FAQPage where relevant, optimize images and include transcripts for video, and earn third-party mentions to strengthen your brand authority.

3. How does Google rank AI content?

Google ranks content for AI Mode by evaluating extractable passages that match sub-queries generated during query fan-out, the credibility signals around those passages, and the technical accessibility of the content. The process leans on model confidence, semantic alignment, and entity-level signals rather than simple keyword density.

4. Can you rank with AI content?

Yes, but not by publishing AI-generated fluff. If you use AI to draft content, make sure it’s accurate, specific, and edited by humans who have the underlying knowledge. The AI will favor factual, verifiable passages and trusted authors, so human oversight and domain expertise are still the differentiators.

5. What’s the difference between Google AI Mode and AI Overviews?

AI Overviews are single-turn summaries that appear directly on the search results page, while Google AI Mode is a dedicated conversational interface that supports multi-turn dialogs and deeper, multimodal reasoning. AI Mode uses a more advanced version of Gemini and supports follow-ups and richer integrations.

6. How does the Query Fan-Out mechanism affect SEO?

Query Fan-Out means the model will break a head query into many targeted sub-queries. SEO needs to shift from broad page-level optimization to fine-grained passage-level coverage that answers those sub-queries. Pages that are chunked into extractable, factual units win more often.

7. Why is semantic completeness important for AI rankings?

Semantic completeness gives the model what it needs in a single extraction, reducing ambiguity and improving confidence. Self-contained passages make it easy for the AI to select and cite your content when composing answers, which increases your chance of being surfaced in AI Mode.

Co-Founder & Visionary Architect

An IIT Delhi alumnus with a Master’s in Artificial Intelligence, Ravi Soni is a pioneer in building AI-native brand ecosystems. With over 12 years of expertise, he has scaled Obbserv Group into a 150-member powerhouse, driving exponential growth for global giants including Amazon, Swiggy, the World Bank, and Y Combinator-funded startups.

Ravi is the architect behind the 3C Framework (Create, Converse, Command) and the TEO Wheel methodology—frameworks he has shared at premier forums like IIM Ahmedabad and IIT Delhi. Through his ventures—Obbserv AI, O+io, and SCRUB—he bridges the gap between deep-tech AI and market dominance. From hyper-realistic generative content to advanced GEO (Generative Engine Optimization) and AI-driven reputation healing, Ravi empowers brands to move beyond traditional marketing into a future of precision, personalization, and ad-free exponential growth.